Research Computing Teams - Research Infrastructure Funding Stories and Link Roundup, 4 Dec 2020

Hi, everyone:

There were two big stories in the news this week about what's possible with sustained research infrastructure funding and what happens when research infrastructure isn't sustained.

In the first, you've probably read about AlphaFold, DeepMind's effort to bring deep learning to protein folding. It did very well in the 14th biennial Critical Assessment of (protein) Structure Prediction (CASP) contest. Predictably but unfortunately, the press releases wildly overhyped the results - "Protein Folding Solved".

Most proteins fold very robustly in the chaotic environment of the cell, and so it's expected that there should be complex features that predict what the proteins' folded configurations look like. We still don't know anything about the model AlphaFold used - other than that it did very well on these 100 proteins - or how it was trained. There are a lot of questions about how it will work with more poorly behaved proteins - a confident but wrong prediction could be much worse than no prediction. But it did get very good results, and with a very small amount of computational time to actually make the predictions. That raises a lot of hope for the scope of near-term future advances.

But as Aled Edwards points out on Twitter, the real story here is one of long-term, multi-decade investment in research infrastructure, including research data infrastructure, by the structural biology community. The Protein Data Bank was set up 50 years ago (!!), and with it a culture of sharing these laboriously solved protein structures, with a norm of contributing to (and helping curate) the data bank. That data bank has been continuously curated and maintained, and new techniques developed, eventually leading to the massive database we have now, on which methods can be trained and results compared.

It's the sustained funding and support - monetarily but also in terms of aligning research incentives like credit - which built the PDB. The other big story we heard this week tells us that you can't just fund a piece of infrastructure, walk away, and expect the result to be self-sustaining. On December 1st, the iconic Arecibo Radio Telescope in Puerto Rico collapsed. The telescope was considered important enough to keep running - there was no move to decommission it until late November - but not important enough to keep funding the maintenance to keep it functioning.

Digital research infrastructure - software, data resources, computing systems - falls apart at least as quickly without ongoing funded effort to maintain it. These digital pieces of infrastructure aren't "sustainable" or not; they are sustained, or not. And too many critical pieces of our digital research infrastructure are not being sustained.

In the coming year, this newsletter will be spending some time giving research computing team managers the tools they need to make it as easy as possible for funders and administrators to make the right decisions and sustain our work.

For now, on to the link roundup!

Managing Teams

The Power of Performance Reviews: Use This System to Become a Better Manager - Lenny Rachitsky, First Round Review

In one-on-ones, there should always be time to touch base on bigger picture items - career goals, finding out what your team members want to focus on, etc. But it's good to have routine longer meetings taking a look back at the past months, and ahead to the next months, outside of the weekly cycle.

Since it's the end of the year, there are lots of management articles about annual performance reviews. I think both "annual" and "performance" are wrong here - annual is too seldom, and focussing on performance is a mistake. This is a good article on what's good about this kind of meeting, though.

These can be really powerful ways to let your team members know what they've done that's really valuable, to get aligned on what's coming next, and to talk about longer-term goals. In our own team we do them 3-4 times a year and I've found it works really well. We don't rate performance or give scores - we look back on the past few months, note the team member's accomplishments, compare them against the goals set, and then plan for the few months ahead. At least some team members quite like them and look forward to them - although initially there was some apprehension - and it's a straightforward way to make sure you both have the same views about the future.

Managing Your Own Career

How to deprioritize tasks, projects, and plans (without feeling like you’re ‘throwing away’ your time and effort) - Jory MacKay, RescueTime

Focus is all about not doing things - which is tough in a research environment when there are so many interesting and valuable things that you could be doing! MacKay's article summarizes some good strategies for choosing the right things not to do.

- Timeboxing - Set limits on how long you’ll work on a task

- Create a ‘not to do’ list

- Use a weekly review to reassess your priorities

- Isolate only the most impactful elements of important tasks

- Ask your team, clients, or boss what they think is most important

Product Management and Working with Research Communities

Covid: Researchers fear cancer advances delay due to pandemic - BBC

A survey of more than 200 scientists at the Institute of Cancer Research suggested research could be delayed by six months, due to factors including the first lockdown and capacity limits.

It's worth emphasizing again that many of the researchers we support, even those who are doing pretty well now, have fallen well behind where they would have been. There may be opportunities to help them catch back up and to take on more of a collaborative role than a purely service-oriented one; and some of those opportunities can become new services offered routinely in the future to other researchers.

Peer Review: Implementing a "publish, then review" model of publishing - Michael B Eisen et al., eLife

This is an exciting development - starting July 2021, the journal eLife will only consider for publication manuscripts that have already been posted as preprints. Journal submission will mainly consist of a link to a bioRxiv or medRxiv preprint. The reviews will also be published when the article is published.

Cool Research Computing Projects

Software-based targeted nanopore sequencing with UNCALLED - Sam Kovaka, Nature Bioengineering Behind The Paper

Targeted nanopore sequencing by real-time mapping of raw electrical signal with UNCALLED - Sam Kovaka, Yunfan Fan, Bohan Ni, Winston Timp & Michael C. Schatz

A nice story about how a class project turned into a first-author paper for PhD student Sam Kovaka. It involves real-time analysis of data coming out of an Oxford Nanopore sequencer to allow selective sequencing - the nanopore can "spit out" sequences that have been rejected. This allows the sequencer to focus just on the sequences of interest - e.g. one kind of bacteria and not another, or even panels of human genes.

It's really nice work combining signal processing, string data structures, and even writing a data simulator.

Research Software Development

High level overview of how Australian Research Data Commons is viewing Research Software as a First Class Object - Tom Honeyman on Twitter

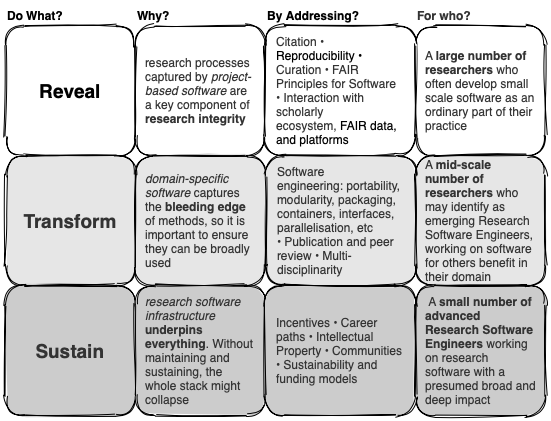

This is a really interesting diagram of how ARDC is thinking of research software:

"Here's a preview of what we're thinking (high level) for a national agenda for #researchsoftware as a first class object @ARDC_AU. Feedback welcome" - Tom Honeyman (@TomHoneyman3), November 30, 2020

The approach is I think the right one, and one I've advocated before; taking a path-to-maturity model approach, where the levels are (in their terms, with my interpretations):

- Reveal: supporting methods development - making sure the code that goes with a paper gets on github (or wherever), is documented and useable, etc. The "Long tail" of research software development

- Transform: Turning that initial code into something that can be run by others

- Sustain: Turning that code into research infrastructure itself - keeping it maintained, widely useable.

I think of this as "proof of concept; prototype; production". They're very different stages. More controversially, I think of only the first, proof of concept, as actually being a research output; prototype and production are about turning that research output into a research input.

How to Make Your Code Reviewer Fall in Love with You - Michael Lynch

A nice article outlining how to write PRs to make them as easy to review as possible - and so easier to approve. Good for individuals working on open source projects and for teams working together.

There are 13 steps there, but several I think deserve calling out:

- Review your own code first - go through the code with a reviewer's eyes

- Answer questions with the code itself - if questions come up, don't just answer them but preempt future readers from having the same questions by clarifying the code or adding comments to address the question

- Separate functional and non-functional changes - don't let something that changes behaviour get buried in a refactoring "while you were there"

- Artfully solicit missing information - "what would you suggest as a better approach"?

- Award all ties to the reviewer

Bringing software to the limelight in the Research Data Alliance - Software Heritage

One of the themes of this newsletter is that research computing systems, research software, and research data management are inextricably intertwined. While individual teams obviously focus on one part of the ecosystem, looking at funding, policy, or staffing at any higher level, even within an institution or faculty, just can't succeed long term if it considers the different components individually.

This is an example of how organizations with quite different focuses are working together to provide more of that integrated view. Research data is increasingly preserved with the scripts needed to analyze and process the data; but the metadata needs for discovery and reusability of software are different than those of data. So the Software Heritage organization is working increasingly closely with the Research Data Alliance to connect those dots.

Research Computing Systems

How to apologize for server outages and keep users happy - Adam Fowler, Tech Target

When AWS has an outage, it's in the news and they publish public retrospectives (and here's a great blog post of the retrospective of the Kinesis incident this week).

Our downtimes and failures don't make the news, but we owe at least that same level of transparency and communication to our researchers. The technical details will differ from case to case. But what's also needed is an apology, and some clear message that the team has learned something from the outage to make it less likely to recur. Fowler outlines what's needed for such an apology:

- Acknowledgement of what you're apologizing for,

- Empathy for the inconvenience the researchers experienced as a result, and

- Resolution - what the fixes are.

Events: Conferences, Training

From experimental software to research infrastructure maturity - 7 Dec 14:30 – 15:00 UTC, SORSE Talk, Carsten Thiel

In issue 49 we talked about the EOSC maturity checklist for software to be installed on the EOSC cloud; this talk by Thiel covers the "why" of this approach and gives more details about the "what".

HPC for Data Science video lecture series - Support session Monday 14 Dec; Warwick University

Because of COVID, this lecture series is not only online but asynchronous and work-at-your-own-pace; but there is a support session where you can get help. There's a nice mix of videos, PDF notes, and exercises. This mix of at-your-own-pace and video synchronous office hours could be a very useful model for other groups.

Random

A very efficient compressed read-only file system for data with significant redundancy - dwarfs.

It's not a super common use case, but if you're supporting research groups which are actively curating a dataset and who want to be able to review changes, roll back, etc., there are several "databases, but with git-like version control" out there now that may be of interest. This New Stack article discusses TerminusDB, Dolt, and others.

Really cool to see AWS's Scalable Reliable Datagram for Elastic Fabric Adapter - high-performance, low-latency network communications - first publicly rolled out for HPC use cases, now being used to connect high-IO nodes to EBS volumes.

A talk extolling the virtues of a text-based markup format for publishing that's ubiquitous in tech - even though it's a little old now, nothing's ever really surpassed it. I'm speaking, of course, about troff.

Everyone's heard about this by now - Mac minis on AWS (not the M1s yet). What's cool is they use AWS's Nitro, so you have bare metal access but can still, e.g., mount EBS volumes.

A comprehensive guide to bash parameter substitution - including default handling, pattern removal, find/replace, etc.

Finally, a gopher browser for the Nintendo Switch.

More reasons than you expect that SELECT * is bad for performance.
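As a small, hedged illustration of one commonly cited reason (which may or may not be among those covered in the linked article, and which is shown here with SQLite rather than any particular production database): naming only the columns you need can let the database answer a query entirely from an index, while `SELECT *` forces it back to the table. The `samples` table, its columns, and the index name below are hypothetical, made up for this sketch.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the 'detail' strings from SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each row is (id, parent, notused, detail); we only want the description.
    return [row[3] for row in rows]


# Hypothetical table: a small key, a name, and a bulky payload column.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE samples (id INTEGER PRIMARY KEY, name TEXT, payload BLOB)"
)
conn.execute("CREATE INDEX idx_samples_name ON samples (name)")
conn.executemany(
    "INSERT INTO samples (name, payload) VALUES (?, ?)",
    [(f"sample-{i}", b"x" * 1024) for i in range(1000)],
)

# Only id and name are requested; both are available in the index itself.
narrow_plan = query_plan(
    conn, "SELECT id, name FROM samples WHERE name = ?", ("sample-42",)
)

# SELECT * also needs payload, so the table row must be read as well.
star_plan = query_plan(
    conn, "SELECT * FROM samples WHERE name = ?", ("sample-42",)
)

print("narrow:", narrow_plan)
print("star:  ", star_plan)
```

In this sketch the narrow query's plan should report a covering index, while the `SELECT *` plan uses the same index only to locate rows and then reads the full row, payload included. Other databases have their own planners, so treat this as an illustration of the idea rather than a benchmark.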

That’s it…

And that’s it for another week. Let me know what you thought, or if you have anything you’d like to share about the newsletter or management. Just email me or reply to this newsletter if you get it in your inbox.

Have a great weekend, and good luck in the coming week with your research computing team,

Jonathan

Jobs Leading Research Computing Teams

Highlights below; full listing available on the job board.

R&D Master and Reference Data Director - AstraZeneca, Gothenburg SE or Cambridge UK

Own the development of the Master and Reference Data Governance Framework for the in scope subject area(s) and supply to development of the business area goals and roadmap.

Build, own, curate and lead the pipeline for reference data requests (crowdsourced + strategic priorities) on priority base approach and in alignment with existing/emerging data use cases.

Apply deep domain expertise to drive solutions to complex business issues and steer the triage and demand routing to the appropriate senior partners to influence strategies.

Lead the direction of and handle Master and Reference Data forums and multi-functional forums

Define, manage the reporting on performance of Master and Reference data processes, standards, quality and compliance within AZ functions and act upon outcomes.

Manager, Research Cloud Development and Operations - University of Melbourne, Melbourne Victoria AU

The Nectar Research Cloud is powered by OpenStack and provides computing resources to researchers across Australia. We are looking for an exceptional person to lead the operations, maintenance, expansion and continual improvement of the Nectar Research Cloud services and manage a diverse team of expert cloud DevOps engineers. The successful candidate should be passionate about the ongoing DevOps practice and running the large distributed system that is the Nectar Research Cloud, enabling the next generation of research capabilities across many research disciplines. The position will liaise with the national Research Cloud node partner operators at remote sites and coordinate their operations.

Director, Data Platforms - IA Financial Group, Québec QC or Montreal QC or Toronto ON CA

As Director of Data Platforms, you will be a key player in the realization of the company's digital ambition. You support our strategy to deliver the data-driven shift through various data distribution platforms and consumption and visualization tools. You lead a multidisciplinary team of information technology experts. You work closely with the different sectors of the company and a network of strategic partners. You ensure that our platforms meet industry best practices and are able to keep pace with the growing needs of the company. Since the use of data is constantly evolving, your curiosity allows you to stay abreast of the latest trends.

Senior Technical Coordinator & Special Projects, Data Analytics, Reporting & Evaluation - Public Health Services Authority BC, Vancouver BC CA

Working in collaboration with other members of the DARE team, the Senior Technical Coordinator and Special Projects is responsible for providing expertise in coordination, implementation and maintenance of the DARE technical, data governance and analytical teams’ projects activities. The Senior Technical Coordinator and Special Projects works collaboratively with members of the DARE data governance and architecture team and multiple other analytical teams across PHSA, other health authorities, and MoH in the planning, implementation and evaluation of DARE or other provincial initiatives. This position will provide consultation, guidance and support to designated project staff, contractors and stakeholders. Using LEAN management principles, this position will identify opportunities for efficiencies and quality improvement and present opportunities to foster a collaborative environment to implement changes. This role may require travel at times to facilitate face to face engagement with stakeholders to provide support in planning and implementing projects.

Manager, Data Management - BCI, Vancouver or Victoria BC CA

Reporting to the Director, Data & Analytics, the Manager, Data Management is responsible for providing technical leadership and ensuring data management and data governance services are developed and delivered with high levels of quality and performance. S/he will build and manage an experienced team of data specialists, and will work closely with other teams in an Agile hybrid environment. S/he will facilitate continuous development across the team and in partnership with others, and ensures that BCI’s valuable data assets are used to achieve business goals and objectives.

Research Computing Product Owner - Imperial College London, London UK

Reporting in to the Head of Products in ICT for line management, to lead and develop the Research Computing Product Line with the aim of providing a cost effective and sustainable service that is responsive to the needs of the academic community.

The Product Owner will agree priorities and backlog prioritisation with the research community (specifically the Director of Research Computing).

The role owns a dedicated group of applications under a Research Computing product line, aligned to a set of business process or function of the College, which covers the full product lifecycle of management including strategic technology roadmap, technology selection, release of new products, change activity on current products, ongoing support for products, technical debt and retirement of sunset products.

The Research Computing Product Owner leads the executive and senior stakeholder engagement with customers of the Research Computing Product Line, ensuring the product set meets the current and future needs of the business and aligns with the business plan of the Department, Faculty or function.

Senior Scientist, Computational Biologist – Biomedical Imaging Data - Sage Bionetworks, Remote until July 2021; Seattle WA USA

Sage Bionetworks is currently recruiting for a senior computational biologist with a background in cancer research. This position presents the opportunity to support cancer research teams that are using imaging assays by increasing their use of systems for validating, visualizing and annotating biomedical images. The position will be responsible for leading interaction with researchers to develop standardized cancer data repositories in collaboration with clinicians, biologists, and computational biologists in academia and industry. The work is inherently collaborative; the position will work closely with scientists and engineers inside Sage and with external researchers.

Senior Bioinformatics Engineer – Informatics Infrastructure - Sage Bionetworks, Remote until July; Seattle WA USA

As a member of our Informatics & Biocomputing team, you’ll work closely with scientists and engineers to support research projects in cancer and other genetic diseases. The work includes prototyping and evaluating solutions to support the harmonization and sharing of biomedical data (e.g., genomic, clinical, and imaging data). You’ll build systems to facilitate storage, management, and analysis of data via cloud, workflow, and containerization technologies. Ideal candidates will be enthusiastic about developing applications, processes, and standards that enable open, collaborative, and reproducible biomedical research.

Modeling and Simulation Manager - Rockport Networks, Ottawa ON CA

As the Modeling and Simulation Manager, you will play a key role in Rockport’s success through your contributions managing the network team throughout the analysis and development of Rockport’s innovative software solutions using the latest technologies in a fast-paced and growing environment. Reporting to the Vice President of Systems Engineering, you will lead the development of models and simulations tools for various Rockport’s network designs.

Manage a small Networking team to advance modelling and simulation capabilities of a novel networking technology. Work closely with product owners, engineers, and architects to develop the best technical solutions. Evaluate and compare needed frameworks, libraries, and tools. Validate implementations and predict performance in large-scale deployments.